CodeShuffler

Role: Developed the initial prototype; later supervised the development of v2.0.

Tools and skills used: Python, Pandas, NumPy.

Organization: UConn

Released in: Spring 2026

About:

With the rapid advancement of generative AI, students in introductory programming courses increasingly earn high scores on automatically graded assignments yet struggle to demonstrate fundamental concepts, such as writing a simple for loop, on paper. It became clear that many students rely heavily on generative AI tools to complete programming assignments, producing near-perfect scores on auto-graded platforms without a corresponding depth of conceptual understanding. This gap highlighted the need for an authentic, scalable assessment approach that yields robust, measurable evidence of student learning in paper-based settings.

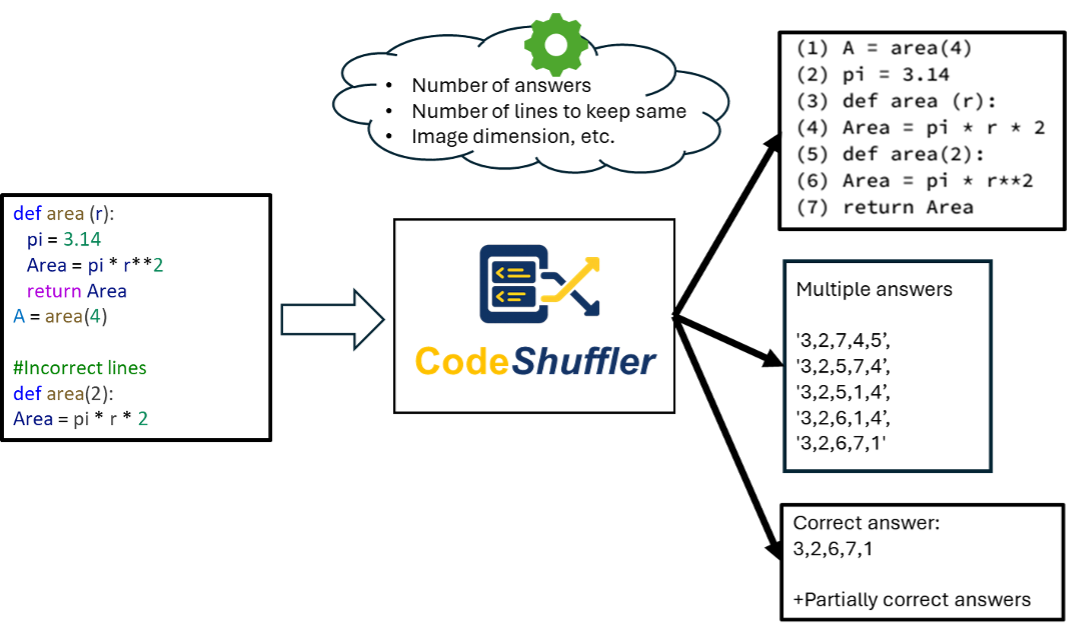

To address this issue, I developed CodeShuffler, an original teaching innovation designed to support equitable and authentic assessment of programming skills while significantly reducing instructors' grading workload. CodeShuffler takes an original code snippet as input (Figure D5), shuffles its lines, renders the shuffled code as an image, and generates a specified number of multiple-choice options, exactly one of which gives the correct order of the lines. The tool also identifies the correct answer automatically. Running the tool multiple times produces multiple versions of the same problem, so students sitting near one another receive equivalent but distinct questions. Importantly, CodeShuffler supports code written in any programming language, which makes it scalable and easy to replicate across courses, departments, and institutions.
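The core shuffling step can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not CodeShuffler's actual implementation: the function name `make_shuffle_question`, the letter labels for lines, and the returned dictionary layout are all hypothetical, and the image-rendering step is omitted. Shuffled lines are labeled A, B, C, and so on, and each option is a string of labels describing one ordering; exactly one option reproduces the original snippet.

```python
import random
import string


def make_shuffle_question(snippet, num_options=4, seed=None):
    """Hypothetical sketch: shuffle a snippet's lines and build ordering-based MCQ options."""
    rng = random.Random(seed)
    # Keep each line's indentation; drop blank lines.
    lines = [ln for ln in snippet.splitlines() if ln.strip()]
    if len(lines) > len(string.ascii_uppercase):
        raise ValueError("this sketch labels lines A-Z, so it supports at most 26 lines")

    # order[k] is the original index of the line displayed at position k.
    order = list(range(len(lines)))
    rng.shuffle(order)
    labels = string.ascii_uppercase[: len(lines)]
    shown = [(labels[k], lines[i]) for k, i in enumerate(order)]

    # The correct option lists the labels in the original line order.
    label_of = {i: labels[k] for k, i in enumerate(order)}
    correct = "".join(label_of[i] for i in range(len(lines)))
    text_of = dict(shown)

    def render(option):
        # The code an option would produce; used to reject duplicate or accidentally correct options.
        return tuple(text_of[label] for label in option)

    options = [correct]
    seen = {render(correct)}
    attempts = 0
    while len(options) < num_options:
        attempts += 1
        if attempts > 10_000:
            raise ValueError("not enough distinct orderings for the requested number of options")
        candidate = "".join(rng.sample(labels, len(labels)))  # a random ordering of the labels
        rendered = render(candidate)
        if rendered not in seen:
            seen.add(rendered)
            options.append(candidate)

    rng.shuffle(options)  # hide the correct option among the distractors
    return {"lines": shown, "options": options, "answer": options.index(correct)}


if __name__ == "__main__":
    snippet = """total = 0
for i in range(5):
    total += i
print(total)"""
    q = make_shuffle_question(snippet, num_options=4, seed=42)
    for label, line in q["lines"]:
        print(f"{label}: {line}")
    for n, option in enumerate(q["options"]):
        print(f"({chr(ord('a') + n)}) {option}")
    print("answer:", chr(ord("a") + q["answer"]))
```

Calling the function with different `seed` values yields distinct versions of the same question. Comparing options by the code they would produce, rather than by their label strings, keeps a snippet with repeated lines (such as several closing braces) from yielding a second option that is also correct.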

To improve accessibility and usability for instructors across all disciplines, CodeShuffler v2.0 was recently released with three major enhancements:

- A user-friendly graphical user interface (GUI)

- Automated generation of partially correct answers with proportional score allocation

- Functionality to shuffle any multiple-choice question (MCQ) exam
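The description above does not specify how partial credit is computed. One plausible rule, assumed here purely for illustration, scores an option by the fraction of positions whose line matches the correct program, and builds near-miss options by swapping a single pair of lines. The names `positional_score` and `partially_correct_options` are hypothetical and do not come from CodeShuffler.

```python
import random


def positional_score(option, correct):
    """Hypothetical rule: fraction of positions where the option matches the correct line."""
    if len(option) != len(correct) or not correct:
        raise ValueError("option and correct answer must be non-empty and the same length")
    matches = sum(1 for got, want in zip(option, correct) if got == want)
    return matches / len(correct)


def partially_correct_options(correct_lines, count, rng):
    """Build up to `count` distinct near-miss orderings, each paired with its partial score."""
    n = len(correct_lines)
    pairs = [(i, j) for i in range(n) for j in range(i + 1, n)]
    rng.shuffle(pairs)  # vary which lines get swapped from version to version
    seen = {tuple(correct_lines)}
    results = []
    for i, j in pairs:
        if len(results) == count:
            break
        candidate = list(correct_lines)
        candidate[i], candidate[j] = candidate[j], candidate[i]
        key = tuple(candidate)
        if key in seen:  # swapping two identical lines (e.g. two "}") changes nothing
            continue
        seen.add(key)
        results.append((candidate, positional_score(candidate, correct_lines)))
    return results


if __name__ == "__main__":
    rng = random.Random(0)
    lines = ["total = 0", "for i in range(5):", "    total += i", "print(total)"]
    for option, score in partially_correct_options(lines, 3, rng):
        print(score, option)
```

With four distinct lines, each single swap leaves two lines in place, so every generated option scores 0.5 under this rule. The function may return fewer than `count` options when the snippet is too short to supply that many distinct swaps.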

The third enhancement demonstrates strong potential for adaptation across disciplines, significantly broadening the tool’s applicability beyond programming courses. Instructors from any discipline can generate multiple versions of the same exam by automatically shuffling questions and associated answer choices, thereby supporting academic integrity, particularly in paper-based assessments.
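Exam-level shuffling follows the same pattern: reorder the questions, reorder each question's choices, and track where the correct choice lands so the answer key stays accurate. The sketch below assumes a simple hypothetical `Question` record and a `make_exam_version` helper; it is an illustration, not CodeShuffler's actual data format or code.

```python
import random
import string
from dataclasses import dataclass


@dataclass
class Question:
    prompt: str
    choices: list[str]
    answer: int  # index of the correct choice within `choices`


def make_exam_version(questions, seed):
    """Return one shuffled version of the exam and its letter answer key (hypothetical sketch)."""
    rng = random.Random(seed)
    version = []
    for q in rng.sample(questions, len(questions)):  # shuffled question order
        order = list(range(len(q.choices)))
        rng.shuffle(order)  # order[k] is the original index of the choice shown at position k
        choices = [q.choices[i] for i in order]
        # The correct choice now sits wherever its original index landed in `order`.
        version.append(Question(q.prompt, choices, order.index(q.answer)))
    key = [string.ascii_uppercase[q.answer] for q in version]
    return version, key


if __name__ == "__main__":
    exam = [
        Question("2 + 2 = ?", ["3", "4", "5", "22"], 1),
        Question("len('abc') = ?", ["2", "3", "4", "0"], 1),
    ]
    for seed in (1, 2):  # one seed per exam version
        version, key = make_exam_version(exam, seed)
        print(f"Version {seed}:", [q.prompt for q in version], "key:", key)
```

Using one seed per version makes each exam reproducible. In practice, choices such as "All of the above" usually need to stay in a fixed position, which this sketch does not handle.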